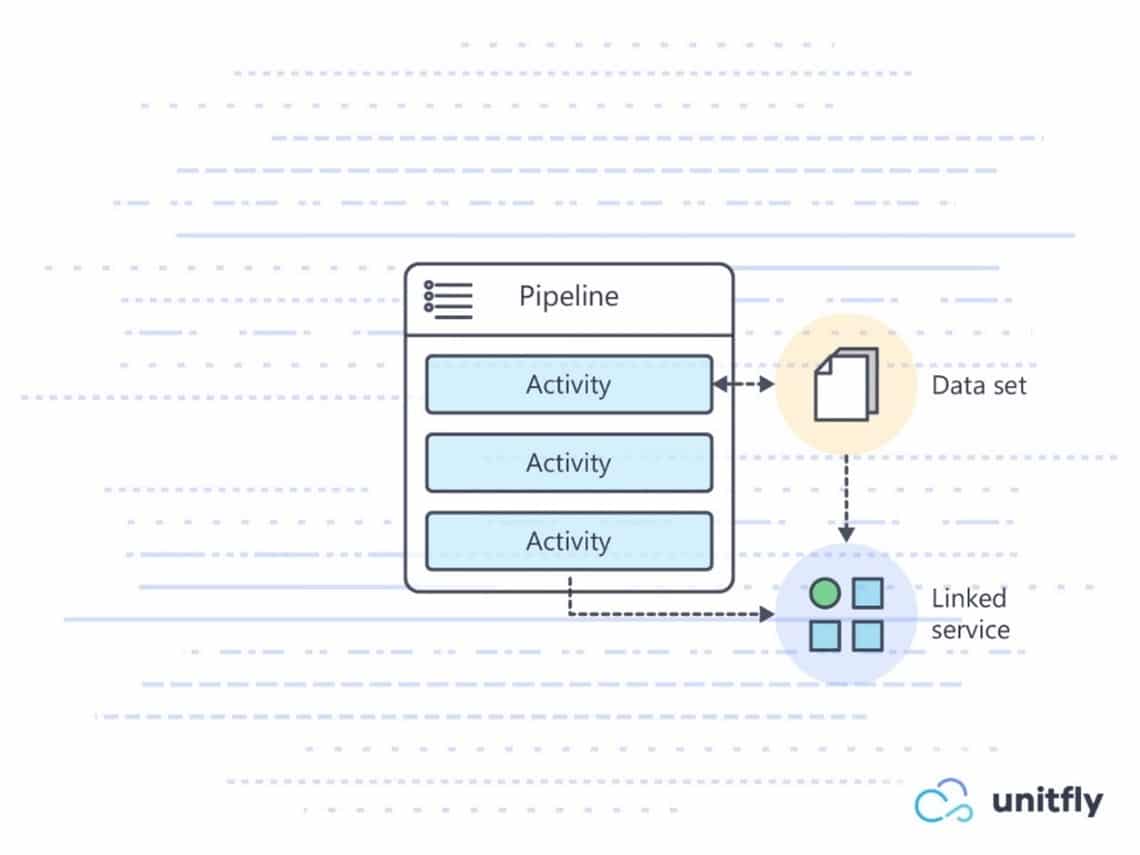

The Pipeline will look as follows:īEGIN TRANSACTION - both changes to Fact.SalesData and truncation of staging data executed as one atomic transaction To copy data from one Azure SQLĭatabase to another we will need a copy data activity followed by stored procedure It comes to 100% Azure or hybrid infrastructures). Point of view this is messy, and I recommend using the Copy Data transform when Procedure by adding a "linked server" to your instance, but from an architectural (It is possible to extend the scope of a stored Solution with SQL Server Stored ProceduresĪzure data factory has an activity to run stored procedures in the Azure SQLĭatabase engine or Microsoft SQL Server. Selecting "Allow insert" and "Allow update" to get data syncedīetween the source and destination using HashId. Firstĭefine the HashId column as the key column and continue with the configuration by We also need to setup update methods on our sink. Records that have equal HashIds and insert new records where HashId has no match To allow data to flow smoothly between the source and destination it will update If you need more information on how to create and run Data Flows in ADF this tipĬreate a Source for bdo.view_source_data and Sink (Destination) for stg.SalesData. Open adf-010 resource and choose "Author & Monitor". This is an all Azure alternative where Dataflows are powered by Data Bricks IR Will be a hash value identity column (in SalesData table it is HashId) using SHA512Īlgorithm. Is somewhat unpractical and IO intensive for SQL database. This poses a challenge for ETLĭevelopers to keep track of such changes.Īs there are so many identity columns using a join transformation to locate records In some cases, due to currency exchange rateĭifferences between sales date and conversion date. 
Like: quantity, unit price, discount, total are updated after the initial order In real world terms, this will be applicable to scenarios where some order details OrderDateTime, ProductName, ProductCode, Color and Size. Tables by uniquely identifying every record using the following attributes: SalesOrderID, The purpose of the ETL will be to keep track of changes between two database ) - This Table should be truncated after each upload Staging area to Copy data to without transformation NOT NULL – keeping track of changesĬREATE TABLE. ON Orders.SalesOrderID = OrderHeader.SalesOrderIDĭestination table definition in dwh database: INNER JOIN SalesLT.SalesOrderHeader AS OrderHeader INNER JOIN SalesLT.SalesOrderDetail AS Orders
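
The HashId idea described in the text can be sketched in T-SQL with the built-in `HASHBYTES` function. This is a minimal illustration, not the author's actual definition: the six input columns are the identifying attributes named in the text, while the `'|'` delimiter, the ISO 8601 date conversion, the `ISNULL` handling and the exact column names exposed by `bdo.view_source_data` are assumptions.

```sql
-- Sketch only: derive a SHA-512 HashId from the six identifying attributes.
-- Assumes bdo.view_source_data exposes the columns under these names.
SELECT
    HASHBYTES(
        'SHA2_512',
        CONCAT_WS(
            '|',                                           -- delimiter (assumption): keeps 'AB' + 'C' distinct from 'A' + 'BC'
            CONVERT(varchar(20), src.SalesOrderID),
            CONVERT(varchar(33), src.OrderDateTime, 126),  -- style 126 = ISO 8601, a stable text form
            src.ProductName,
            src.ProductCode,
            ISNULL(src.Color, ''),                         -- CONCAT_WS skips NULLs, so keep the position with ''
            ISNULL(src.Size, '')
        )
    ) AS HashId,                                           -- HASHBYTES returns varbinary; SHA2_512 yields 64 bytes
    src.SalesOrderID
FROM bdo.view_source_data AS src;
```

Hashing a delimited concatenation means a single 64-byte comparison stands in for a six-column join predicate, which is the motivation the text gives for the HashId column.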

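
The stored procedure step, where the changes to Fact.SalesData and the truncation of the staging table run as one atomic transaction, might look like the sketch below. The procedure name `dbo.usp_LoadSalesData` and the measure columns (`Quantity`, `UnitPrice`, `Discount`, `Total`) are hypothetical, and the sketch assumes both tables carry a `HashId` column plus the six identifying attributes.

```sql
-- Sketch only: procedure name and measure column names are hypothetical.
CREATE OR ALTER PROCEDURE dbo.usp_LoadSalesData
AS
BEGIN
    SET NOCOUNT ON;
    SET XACT_ABORT ON;  -- run-time errors doom the transaction, so it cannot partially commit

    BEGIN TRY
        BEGIN TRANSACTION;

        -- Update rows whose HashId already exists in the fact table.
        UPDATE f
        SET f.Quantity  = s.Quantity,
            f.UnitPrice = s.UnitPrice,
            f.Discount  = s.Discount,
            f.Total     = s.Total
        FROM Fact.SalesData AS f
        INNER JOIN stg.SalesData AS s
            ON s.HashId = f.HashId;

        -- Insert rows whose HashId has no match yet.
        INSERT INTO Fact.SalesData
            (HashId, SalesOrderID, OrderDateTime, ProductName, ProductCode,
             Color, Size, Quantity, UnitPrice, Discount, Total)
        SELECT s.HashId, s.SalesOrderID, s.OrderDateTime, s.ProductName, s.ProductCode,
               s.Color, s.Size, s.Quantity, s.UnitPrice, s.Discount, s.Total
        FROM stg.SalesData AS s
        WHERE NOT EXISTS (SELECT 1 FROM Fact.SalesData AS f WHERE f.HashId = s.HashId);

        -- TRUNCATE TABLE is transactional in SQL Server, so it rolls back with the rest.
        TRUNCATE TABLE stg.SalesData;

        COMMIT TRANSACTION;
    END TRY
    BEGIN CATCH
        IF @@TRANCOUNT > 0 ROLLBACK TRANSACTION;
        THROW;  -- re-raise so the calling ADF activity reports the failure
    END CATCH
END;
```

Keeping the truncation inside the same transaction means a failed load leaves the staging rows in place, so the pipeline can simply be rerun.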